In a previous blog post I mentioned that I was not able to add my Windows 10 installation to the Grub boot menu. I have finally found a solution. In my last Linux blog post I also mentioned that I ultimately gave up on Linux after trying Ubuntu 20.04. Well, I could not stop thinking about it. I am on Pop!_OS again, and although I did not disconnect any SSD during installation, Pop! did not detect Windows 10 and add it to the boot menu on its own. So I was back where I started.

Quick recap of the setup: I have two SATA SSDs (yes, SATA, like a caveman), one with Windows 10 (the Crucial MX500) and one with Pop!_OS Linux (the Samsung 850 Evo). The bootloader for each OS is on its respective SSD.

Now, enough background, let us get to the solution!

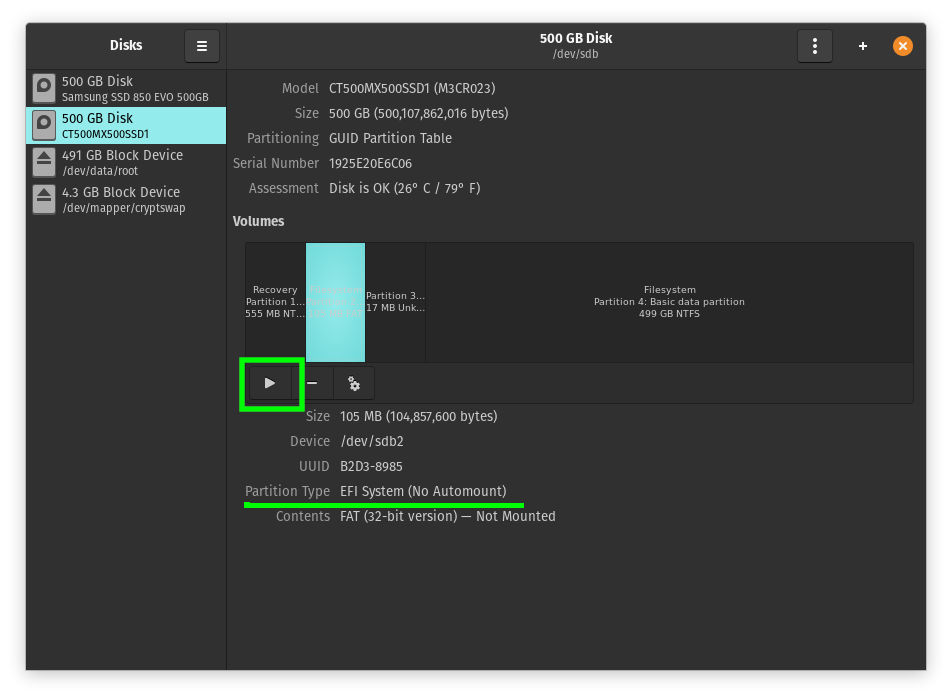

If you are a CLI wizard, do your thing; I will be using a convenient UI for the first step. Open “Disks” and locate the Windows 10 EFI partition. It is around 100 MB in size. Once you have found it, click the “Play” button to mount it.

The Disks utility will then display the mount point that is required in the next step.
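For the CLI wizards, here is a rough sketch of the same step in a terminal. It assumes `lsblk` from util-linux is installed. The helper name `list_vfat_partitions`, the device `/dev/sdX1` and the mount point `/mnt/win-efi` are placeholders made up for illustration, so substitute whatever your system shows.

```shell
# Print every FAT-formatted partition as "<device> <size>".
# Expects lines in the format produced by: lsblk -prno NAME,FSTYPE,SIZE
# (list_vfat_partitions is a hypothetical helper, not a standard tool)
list_vfat_partitions() {
    awk '$2 == "vfat" { print $1, $3 }'
}

# Usage on a real system (device and mount point are placeholders):
#   lsblk -prno NAME,FSTYPE,SIZE | list_vfat_partitions
#   sudo mkdir -p /mnt/win-efi
#   sudo mount /dev/sdX1 /mnt/win-efi
```

The Windows 10 EFI partition should be the FAT partition of roughly 100 MB on the Windows SSD. If you mount it this way, the mount point for the next step is the directory you passed to `mount`.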

Now, copy some Windows 10 boot files into your Linux /boot folder (more precisely, into /boot/efi/EFI). Yes, you read that right. Sounds weird, but it did the trick. Do this with Nautilus or use the following command (which I recommend). Replace <mount point> with the path you got from the Disks utility. Note that path completion does not work once you go past /boot/efi. The EFI folder exists; you merely do not have permission to see it as a regular user.

sudo cp -r /<mount point>/EFI/Microsoft /boot/efi/EFI
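If you want a bit more safety around that copy, the following is a minimal sketch that first checks for the Windows boot manager at its usual location (`EFI/Microsoft/Boot/bootmgfw.efi`) and lists the copied file afterwards. The function name `copy_windows_loader` is made up for this example, and the sketch assumes you run it from a root shell (for instance after `sudo -s`), since /boot/efi/EFI is not readable as a regular user.

```shell
# copy_windows_loader <windows-efi-mount-point> <linux-efi-partition>
# Hypothetical helper: copies EFI/Microsoft from the mounted Windows EFI
# partition into the Linux one, but only if the Windows boot manager is
# actually present at the source.
copy_windows_loader() {
    src="$1"
    dst="$2"
    if [ ! -f "$src/EFI/Microsoft/Boot/bootmgfw.efi" ]; then
        echo "No Windows boot manager found under $src" >&2
        return 1
    fi
    # Same copy as the one-liner above, followed by a quick check
    # that the boot manager arrived at the destination.
    cp -r "$src/EFI/Microsoft" "$dst/EFI/" &&
        ls -l "$dst/EFI/Microsoft/Boot/bootmgfw.efi"
}

# Usage from a root shell (replace the placeholder with your mount point):
#   copy_windows_loader "<mount point>" /boot/efi
```

If the function prints the error message instead of a file listing, you have most likely mounted the wrong partition.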

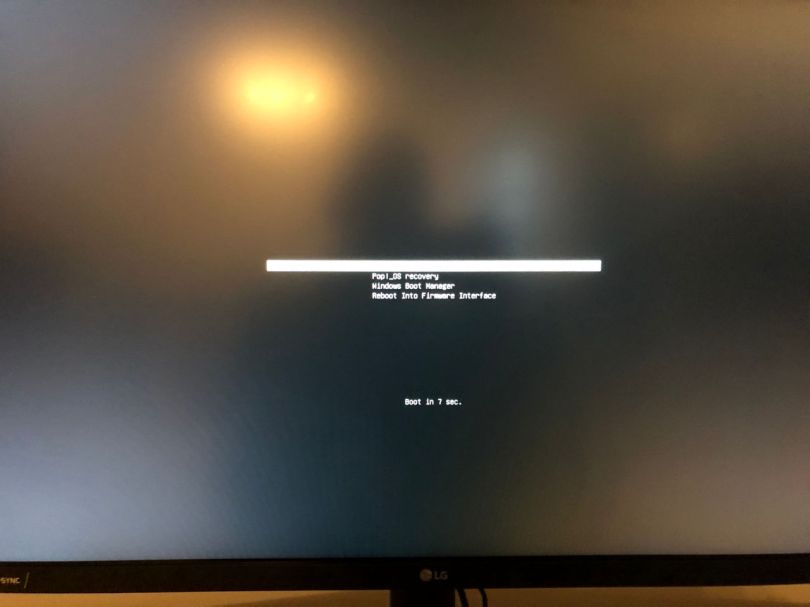

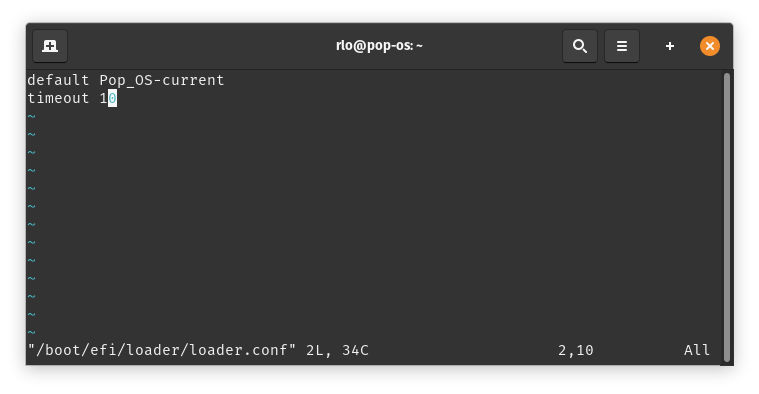

The last step consists of making the boot menu show up so you can actually select an entry. Edit loader.conf and add the line “timeout 10” (or any number of seconds you prefer).

sudo vim /boot/efi/loader/loader.conf
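If you would rather not open an editor, here is a small sketch that sets the timeout non-interactively. It assumes GNU sed (the default on Pop!_OS) and a root shell. The function name `set_loader_timeout` is invented for this example.

```shell
# set_loader_timeout <path-to-loader.conf> <seconds>
# Hypothetical helper: replaces an existing "timeout" line, or appends
# one if the file does not have it yet.
set_loader_timeout() {
    conf="$1"
    seconds="$2"
    if grep -q '^timeout[[:space:]]' "$conf"; then
        # A timeout line already exists: overwrite its value in place.
        sed -i "s/^timeout[[:space:]].*/timeout $seconds/" "$conf"
    else
        # Make sure the file ends with a newline before appending.
        [ -n "$(tail -c1 "$conf")" ] && echo >> "$conf"
        printf 'timeout %s\n' "$seconds" >> "$conf"
    fi
}

# Usage from a root shell:
#   set_loader_timeout /boot/efi/loader/loader.conf 10
```

Running it a second time with a different value only changes the existing line, so you do not end up with duplicate timeout entries.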

All you need to do now is reboot and (hopefully) enjoy a boot menu with your Pop!_OS and Windows 10 boot entries. I do not know if this procedure also works with other Linux distributions. It might for the Ubuntu-based ones, but I cannot say.